Touching the screen and physics

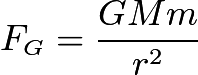

A hand touching the screen is supposed to simulate the placement of a gravity source at the location of the hand. This means that every particle is going to be affected by a certain force depending on its position relative to the hand touching the screen. All the calculations are based on Newton's law of universal gravitation, in order to get real physics behavior. The following formula can be used to describe the force which affects each particle. G is a gravitational constant, M and m are the masses of the two different objects, i.e. the gravity source (a touching hand) and a particle. r is the distance between these two. All these variables result in the force Fg.

Touching the screen with one hand will thus add a force directed from each particle towards the position of the hand. This will alter the velocity and direction in which the particle was previously heading, for each rendered frame. Particles which are far away from the hand will not be affected nearly as much as those closer to the hand, due to the division by the distance squared.

Once there is more than one hand touching the screen, this calculation has to be performed for each hand, on all the particles. The forces can then be sumarized to get the final force to change each particle's direction and velocity. Hence, there are a ton of calculations being done in order to render each frame, which is one of the main reasons we wanted to use the GPU in the first place.

One thing to note here is that the particles do not add any force to all the other particles in the scene, even though the formula states that they clearly would. However we did not like that idea, and only wanted the user interaction to be what changes the state of the scene. To further motivate why the particles do not affect each other it could simply be for the reason that their masses are too insignificant to result in any force big enough to be worth taking into account.

Restrictions

A couple of problems occured while implementing everything mentioned above.

- What happens if a particle reaches one of the screen's edges?

- What behavior do we need to implement for those cases?

- Do we need a bounding box?

- Should all edges have the same behavior?

The first thing we realized is that we have to set a bounding box. There is no point of having particles outside of the visible parts of the scene, and if a particle would travel too far away, it would be very difficult to pull it back into frame due to the large distance it could build up.

Particles without a bounding box

Thus, we set limitations for all edges. Any particle exceeding the limit would have its position capped and its velocity set to zero depending on which axis it would try to exceed. For instance, if a particle is trying to escape on the left side of the screen, i.e. on the negative side of the x-axis, it will have its x-velocity set to zero, while the y-velocity remains the same. Thus the particle will slide along the edge of the screen until it reaches a corner, where the same thing would happen to the y-velocity.

This means particles trying to exit the scene stick onto the "walls" and corners. When being attracted back towards the center of the screen, the particles form cool looking shapes which we thought was an amazing feature.

Stopping particles which go too far

One exception is the upper edge of the screen. In this case we did not want the particles to stick to the edge, because it is hard to reach the top of the screen due to its height. Thus those particles stuck at the top are having a hard time getting back into the action. Therefore, we set the top edge to bounce the particles back down, simply by reversing the y-velocity and scaling it down by a factor 10 in order to maintain smooth movement and prevent chaos.

Bouncing particles back from the wall

Pacticles appear at the other side (experimental feature, not implemented!)

Sticky walls and bouncy top (final version)

Inga kommentarer:

Skicka en kommentar